I’m Jiyeong Oh, an AI engineer working across perception, robotics, and healthcare. I build systems that take signals from cameras, sensors, and wearables and turn them into something a robot or edge device can act on reliably, in real conditions, not just controlled tests.

My background spans deep learning research and production engineering. I’ve trained vision and 3D perception models, deployed low-latency inference pipelines, and published in human-robot interaction and digital health. A lot of my work sits at the boundary between visual perception and physiological data: how those two kinds of signals can be combined to make robotic systems more responsive and trustworthy when they’re operating around real people.

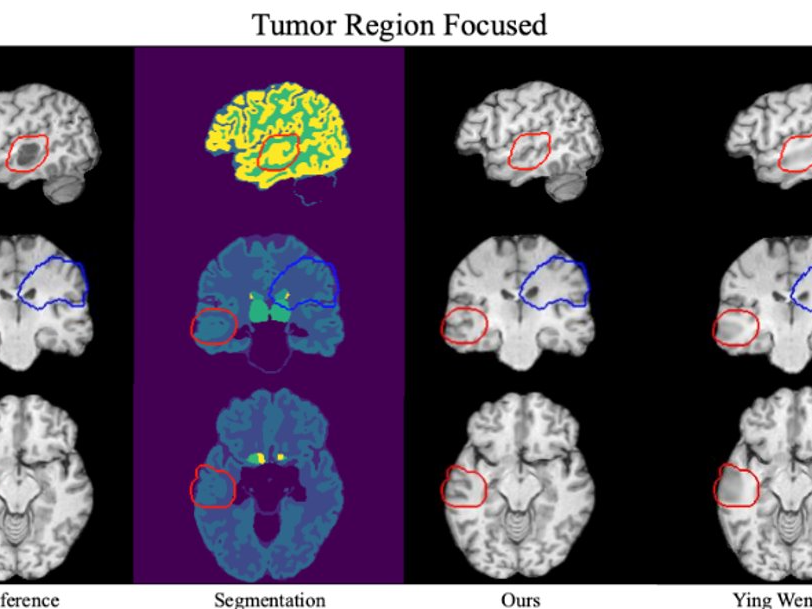

I’ve built companion robots for dementia and autism care, generative inpainting models for 3D medical imaging, and multi-agent control systems for autonomous racing. The domains differ, but the question driving all of it is the same: what does it actually take to make AI dependable outside the lab, in environments that don’t stay clean and predictable?

That’s the problem I want to keep working on. I’m interested in connecting with people building AI that has to work in the physical world.

Research Areas

Robotic Perception & Control (Planning, Optimization, Sensor Fusion)

Computer Vision, Medical AI

Wearable Sensing, Biomarkers

Human-Robot Interaction

Education

Indiana University

Bloomington, IN, USA

M.S. in Data Science ’26

Hanyang University

Seoul, Korea

B.S. in Data Science

Awards

Fulbright U.S.-Korea Presidential STEM Initiative Scholar

Publications

Casey C. Bennett, Cedomir Stanojevic, Jiyeong Oh, Junyeong Ahn, Yeeun Jeon, Kanghee Son, and Nikki M. Abbott (2024) “Digital health sensor data in autism: Developing few shot learning approaches for traditional machine learning classifiers.” IEEE-EMBS International Conference on Body Sensor Networks (BSN). In Press.

🔗 paper

Bennett, C. C., Šabanović, S., Stanojević, C., Henkel, Z., Kim, S., & Lee, J. (2023). Enabling robotic pets to autonomously adapt their own behaviors to enhance therapeutic effects: a data-driven approach. In IEEE International Conference on Robot and Human Interactive Communication (RoMAN).

🔗 paper

Oh, J. Y., & Bennett, C. C. (2023, March). The Answer lies in User Experience: Qualitative Comparison of US and South Korean Perceptions of In-home Robotic Pet Interactions. In Companion of the 2023 ACM/IEEE International Conference on Human-Robot Interaction (pp. 306-310).

🔗 paper 🎥 video

Jiyeong Oh, Eunseo Yoon, Mingon Jeong & Jaehoon Kim. (2023). Comparison Study of User Experience on Universal Design in Virtual Reality Locomotion. Human-Computer Interaction Korea Conference (HCI Korea), 1228-1231.

🔗 paper

Bennett, C. C., Stanojevic, C., Kim, S., Lee, J., Yu, J., Oh, J., … & Piatt, J. A. (2022, August). Comparison of in-home robotic companion pet use in South Korea and the United States: a case study. In 2022 9th IEEE RAS/EMBS International Conference for Biomedical Robotics and Biomechatronics (BioRob) (pp. 01-07). IEEE.

🔗 paper